Seven of the published autonomic sleep articles on this site reference wearable data — heart rate variability (HRV), REM percentage, heart rate patterns. Millions of people check Oura, WHOOP, or Apple Watch sleep data every morning. When the tracker says REM was low or deep sleep was short, the next question is whether that number reflects brain activity or a measurement artifact.

This article covers what wearables measure versus what polysomnography measures, which sleep metrics to trust, device-specific accuracy data from published validation studies, and when tracker data might point to a sleep problem versus orthosomnia (tracker-induced sleep anxiety). It does not cover the full autonomic overview; for that, see Autonomic Sleep Disruption: What It Is, How It Fragments Sleep, and How to Recognize It. Accurate measurement is the first step in identifying which autonomic factors might be disrupting sleep.

What Do Sleep Trackers Measure Compared to Polysomnography (PSG)?

Polysomnography (PSG) — the reference standard for sleep measurement — records brain activity directly. Electroencephalography (EEG) electrodes detect the slow waves that define N3 (deep sleep), the theta rhythms and sleep spindles of N2 (light sleep), and the mixed-frequency low-voltage activity of REM. Electrooculography (EOG) detects the rapid eye movements that give REM its name. Electromyography (EMG) detects the muscle atonia (loss of voluntary muscle tone) that distinguishes REM from wakefulness. Each 30-second epoch of the night is scored into a stage based on these brain-wave and muscle-activity patterns.

Consumer wearables measure none of those inputs. Instead, they use accelerometers (detecting motion and stillness) and photoplethysmography (PPG — shining light through skin to measure pulse rate and heart rate variability). Some devices add skin temperature sensors. Algorithms — proprietary and different across brands — translate these indirect measurements into sleep stage estimates. Reduced movement combined with lower heart rate and increased HRV is classified as probable deep sleep. Increased heart rate variability with irregular breathing patterns is classified as probable REM.

The distinction matters because the mapping from heart rate patterns to brain-defined sleep stages is imprecise. A 2024 scoping review by Birrer et al. analyzed 35 studies evaluating 62 wearable device setups against PSG (Birrer et al., 2024). Accelerometer-only devices averaged 86.7% accuracy for binary sleep/wake detection. Adding PPG raised that to 87.8% — a difference that was not statistically meaningful. For multi-stage classification (wake, light, deep, REM), accuracy dropped to 65.2% with kappa values of 0.52-0.58. The Somfit device, which uses a forehead-mounted EEG sensor in addition to accelerometry and PPG, achieved the highest kappa (0.52 in the six-device comparison), demonstrating that brain-wave sensing remains the accuracy ceiling for sleep staging.
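Accuracy and kappa answer different questions, and a small worked example may make the gap between them easier to see. The sketch below computes both from two stage-by-stage records of the same night. The ten-epoch hypnograms and the function names are hypothetical, made up for illustration, and are not taken from any of the cited studies.

```python
from collections import Counter

def epoch_agreement(device, psg):
    """Fraction of epochs where the device and PSG assign the same stage."""
    if len(device) != len(psg) or not psg:
        raise ValueError("hypnograms must be non-empty and the same length")
    return sum(d == p for d, p in zip(device, psg)) / len(psg)

def cohens_kappa(device, psg):
    """Agreement corrected for the agreement expected by chance alone."""
    n = len(psg)
    observed = epoch_agreement(device, psg)
    device_counts = Counter(device)
    psg_counts = Counter(psg)
    # Chance agreement: probability both sources pick the same stage
    # if each labelled epochs at random with its own stage proportions.
    expected = sum(device_counts[s] * psg_counts[s] for s in psg_counts) / (n * n)
    if expected == 1.0:
        return 1.0  # degenerate case: both records contain a single stage
    return (observed - expected) / (1 - expected)

# Hypothetical hypnograms (W = wake, L = light, D = deep, R = REM).
psg    = ["W", "L", "L", "D", "D", "L", "R", "R", "L", "W"]
device = ["L", "L", "L", "D", "L", "L", "R", "L", "L", "W"]

print(f"agreement = {epoch_agreement(device, psg):.2f}")
print(f"kappa     = {cohens_kappa(device, psg):.2f}")
```

In this toy night the device matches PSG on 7 of 10 epochs, yet kappa comes out lower than the raw agreement because a device that labels most epochs as light sleep gets many matches by chance.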

When a tracker reports “REM was 45 minutes,” it is estimating from heart rate and movement patterns — not measuring brain activity. That estimate may be close to the true value or off by 20 minutes or more, depending on the device and the night.

How Accurate Are Oura Ring, WHOOP, and Apple Watch for Sleep Stage Classification?

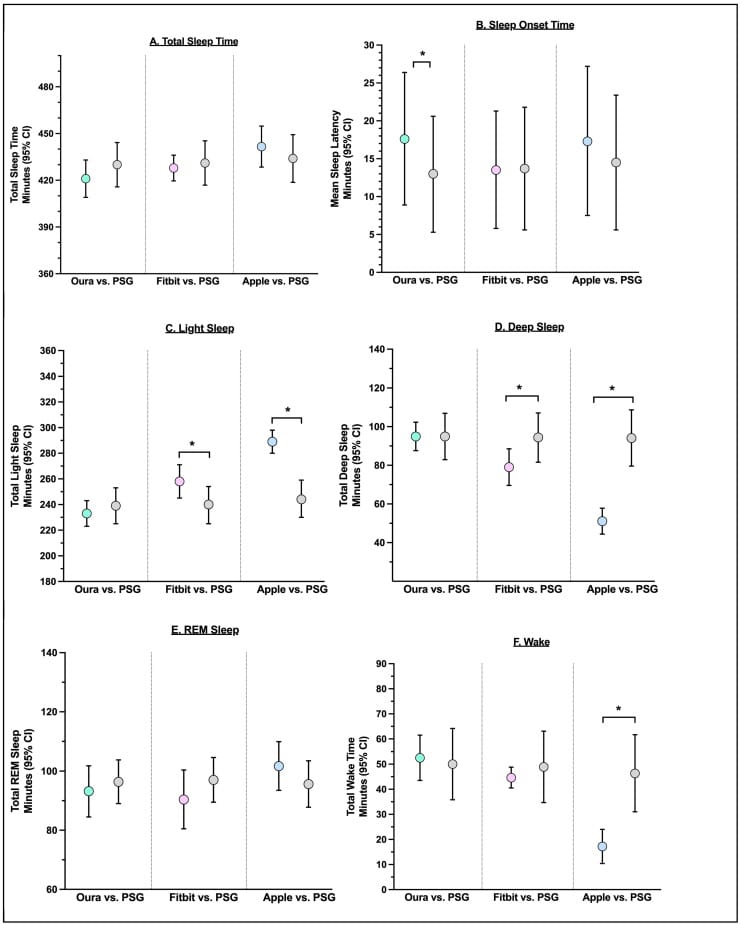

The largest head-to-head comparison of current-generation devices comes from Robbins et al. (2024), who tested Oura Ring Gen3, Fitbit Sense 2, and Apple Watch Series 8 simultaneously against PSG in 35 healthy adults aged 20-50. All three devices achieved 91-93% agreement with PSG for binary sleep/wake detection and greater than 95% sensitivity for identifying sleep epochs.

Sleep stage classification revealed larger differences between devices. Oura Ring Gen3 achieved 76.0-79.5% sensitivity and 77.0-79.5% precision across stages, with no statistically significant difference from PSG on seven of eight sleep metrics examined. It showed negligible proportional bias — meaning its errors did not increase or decrease with longer or shorter sleep. Fitbit Sense 2 achieved 61.7-78.0% sensitivity, overestimated light sleep by 18 minutes, and underestimated deep sleep by 15 minutes. Apple Watch Series 8 had the widest performance range (50.5-86.1% sensitivity across stages), underestimated deep sleep by 43 minutes, and overestimated light sleep by 45 minutes.
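The terms bias and proportional bias come from Bland-Altman analysis, and a short sketch may help show what each one captures. The code below computes the mean bias, the 95% limits of agreement, and a least-squares slope of the device-minus-PSG difference against the average of the two readings. The five nights of deep sleep minutes are hypothetical values chosen for illustration, not data from Robbins et al. (2024), and the function name is an assumption.

```python
from statistics import mean, stdev

def bland_altman(device_minutes, psg_minutes):
    """Return (bias, lower limit, upper limit, proportional-bias slope)."""
    diffs = [d - p for d, p in zip(device_minutes, psg_minutes)]
    avgs = [(d + p) / 2 for d, p in zip(device_minutes, psg_minutes)]
    bias = mean(diffs)                 # average over- or underestimate
    spread = 1.96 * stdev(diffs)       # half-width of the 95% limits
    # Slope of difference on average: near zero means the error does not
    # grow or shrink as the amount of sleep being measured changes.
    avg_mean = mean(avgs)
    sxx = sum((a - avg_mean) ** 2 for a in avgs)
    sxy = sum((a - avg_mean) * (df - bias) for a, df in zip(avgs, diffs))
    slope = sxy / sxx if sxx else 0.0
    return bias, bias - spread, bias + spread, slope

# Hypothetical deep sleep minutes over five nights.
psg_deep    = [90, 70, 110, 80, 100]
device_deep = [50, 30, 60, 40, 55]

bias, lower, upper, slope = bland_altman(device_deep, psg_deep)
print(f"bias = {bias:.1f} min, limits = [{lower:.1f}, {upper:.1f}], slope = {slope:.2f}")
```

A negative bias means the device reads low on average. A slope near zero corresponds to the "negligible proportional bias" described above, while a clearly negative slope, as in this toy data, means the underestimate grows on nights with more deep sleep.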

An earlier six-device comparison by Miller et al. (2022) tested Oura Ring Gen2 and WHOOP 3.0 alongside Apple Watch S6, Garmin Forerunner 245, Polar Vantage V, and Somfit in 53 healthy adults. All devices achieved 86-89% agreement for binary sleep/wake, but only 50-65% for multi-stage classification. Oura Gen2 reached 89% for sleep/wake and 61% for multi-stage (kappa 0.43). WHOOP 3.0 reached 86% for sleep/wake and 60% for multi-stage (kappa 0.40).

A 2025 study by Schyvens et al. tested six wrist-worn devices (including WHOOP 4.0, Apple Watch Series 8, and two Fitbit models) simultaneously against PSG in 62 adults with a mean age of 46. All devices showed greater than 90% sleep sensitivity but only 29-52% wake specificity — confirming that wearables are better at detecting sleep than detecting wakefulness within a sleep period. Apple Watch Series 8 achieved the highest overall epoch-level kappa (0.53). All devices underestimated wake-after-sleep-onset by 12-48 minutes and overestimated sleep efficiency by 2-10%.
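High sleep sensitivity can coexist with low wake specificity because most epochs in a night are sleep. The sketch below illustrates this with a hypothetical 20-epoch record in which the device catches nearly all sleep but only one of four wake epochs. The labels, counts, and function name are assumptions for illustration, not values from Schyvens et al. (2025).

```python
def sleep_wake_metrics(device, psg):
    """Return (sleep sensitivity, wake specificity, overall accuracy)."""
    pairs = list(zip(device, psg))
    sleep_hits = sum(d == "S" and p == "S" for d, p in pairs)  # sleep called sleep
    wake_hits = sum(d == "W" and p == "W" for d, p in pairs)   # wake called wake
    psg_sleep = sum(p == "S" for p in psg)
    psg_wake = sum(p == "W" for p in psg)
    sensitivity = sleep_hits / psg_sleep
    specificity = wake_hits / psg_wake
    accuracy = (sleep_hits + wake_hits) / len(pairs)
    return sensitivity, specificity, accuracy

# Hypothetical night: PSG scores 16 sleep epochs and 4 wake epochs.
psg    = ["S"] * 16 + ["W"] * 4
# The device misses one sleep epoch and labels three wake epochs as sleep.
device = ["S"] * 15 + ["W"] + ["S"] * 3 + ["W"]

sens, spec, acc = sleep_wake_metrics(device, psg)
print(f"sleep sensitivity = {sens:.2f}")
print(f"wake specificity  = {spec:.2f}")
print(f"overall accuracy  = {acc:.2f}")
```

Overall accuracy stays high here even though three quarters of the wake epochs are missed, which is the same mechanism that leads devices to underestimate wake-after-sleep-onset and overestimate sleep efficiency.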

The consistent pattern across studies: devices perform better at detecting deep sleep and REM than light sleep or wake, because deeper sleep stages produce more distinctive heart rate and movement patterns.

Which Sleep Tracker Metrics Are Reliable Enough to Act On?

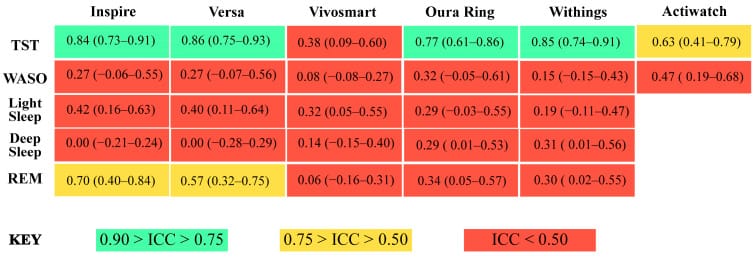

Kainec et al. (2024) conducted a three-way comparison — five consumer devices versus research-grade actigraphy versus PSG — in 50 healthy young adults. This design allows benchmarking consumer wearables against both the reference standard (PSG) and the established research tool (actigraphy) simultaneously.

Total sleep time was the best-performing metric, with intraclass correlation coefficients (ICC) of 0.77-0.86 across devices — classified as “good” agreement. Sleep efficiency (the percentage of time in bed spent asleep) was moderately reliable. Heart rate and heart rate variability (HRV) were generally accurate across devices in the Miller et al. (2022) six-device study. Beyond these, accuracy dropped sharply.

Wake-after-sleep-onset (WASO) was the worst metric across all devices: ICC values below 0.50, with mean absolute percent error ranging from 59.3% to 138.5%. At those error rates, a tracker reporting 30 minutes of wake time during the night could be off by roughly 18 to 42 minutes. Deep sleep duration had ICC values of 0.00-0.31, which falls in the poor range. REM sleep mean absolute percent error exceeded 20% for all five devices.
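The 18-to-42-minute figure follows from applying the two ends of the reported error range to a 30-minute reading. The sketch below reproduces that arithmetic and shows how a mean absolute percent error is computed from paired nights. The three nights of WASO values and the function name are hypothetical, chosen for illustration only.

```python
def mean_absolute_percent_error(device_minutes, psg_minutes):
    """Average of |device - PSG| / PSG across nights, as a percentage."""
    errors = [abs(d - p) / p for d, p in zip(device_minutes, psg_minutes) if p > 0]
    if not errors:
        raise ValueError("need at least one night with a non-zero PSG value")
    return 100 * sum(errors) / len(errors)

# Hypothetical WASO minutes for three nights.
psg_waso    = [40, 20, 50]
device_waso = [20, 35, 25]
print(f"MAPE = {mean_absolute_percent_error(device_waso, psg_waso):.1f}%")

# Arithmetic behind the 30-minute example in the text.
reading = 30
low_error = reading * 59.3 / 100    # best-performing device
high_error = reading * 138.5 / 100  # worst-performing device
print(f"possible error: {low_error:.1f} to {high_error:.1f} minutes")
```

Strictly, percent error is defined relative to the PSG value rather than the tracker reading, so applying it to the number on the screen is an approximation that gives a sense of scale rather than an exact bound.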

Lee et al. (2023) tested 11 devices spanning wearable, nearable (bedside), and under-mattress categories across 75 participants and 543 PSG hours. Macro F1 scores ranged from 0.26 to 0.69 — high variance across the consumer market. The top-performing device for staging was SleepRoutine (an under-mattress tracker, F1 = 0.69), followed by Amazon Halo Rise (a nearable device, F1 = 0.62). Wearables as a class tended to overestimate sleep efficiency. Nearable devices overestimated sleep latency by approximately 29 minutes on average.
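Macro F1 averages a per-stage score so that a rarely occurring stage counts as much as a common one. The sketch below shows one common way to compute it, assuming stages are taken from the PSG record. The hypnograms and function name are hypothetical, and the exact averaging used by Lee et al. (2023) may differ in detail.

```python
def macro_f1(device, psg):
    """Unweighted mean of per-stage F1 scores over the stages PSG scored."""
    pairs = list(zip(device, psg))
    scores = []
    for stage in sorted(set(psg)):
        tp = sum(d == stage and p == stage for d, p in pairs)  # correct calls
        fp = sum(d == stage and p != stage for d, p in pairs)  # false alarms
        fn = sum(d != stage and p == stage for d, p in pairs)  # misses
        # F1 = 2TP / (2TP + FP + FN); the stage appears in PSG, so the
        # denominator is always at least 1.
        scores.append(2 * tp / (2 * tp + fp + fn))
    return sum(scores) / len(scores)

# Hypothetical hypnograms (W = wake, L = light, D = deep, R = REM).
psg    = ["W", "L", "L", "D", "D", "L", "R", "R", "L", "W"]
device = ["L", "L", "L", "D", "L", "L", "R", "L", "L", "W"]

print(f"macro F1 = {macro_f1(device, psg):.2f}")
```

Because each stage carries equal weight, a device that defaults to light sleep is penalized for every wake, deep, and REM epoch it misses, which is part of why macro F1 values spread so widely across the consumer market.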

The practical takeaway is a reliability hierarchy, ranked from highest to lowest: (1) total sleep time — good across devices, (2) sleep efficiency — moderately reliable, (3) heart rate and HRV — generally accurate, (4) sleep stage percentages — moderate at best, (5) wake-after-sleep-onset — unreliable.

Single-night readings contain too much measurement error for useful interpretation of sleep stages. Week-over-week or month-over-month trends in the reliable metrics — total sleep time, resting heart rate, HRV — carry more information than any individual night’s deep sleep or REM percentage.
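One simple way to look at trends rather than single nights is to average each week and compare the averages. The sketch below does this for two weeks of total sleep time. The nightly values and function name are hypothetical, and a seven-night window is an assumption rather than a recommendation from the cited studies.

```python
from statistics import mean

def weekly_means(nightly_values, nights_per_week=7):
    """Average each complete block of nights; a trailing partial week is dropped."""
    last_start = len(nightly_values) - nights_per_week
    return [
        mean(nightly_values[start:start + nights_per_week])
        for start in range(0, last_start + 1, nights_per_week)
    ]

# Hypothetical total sleep time in minutes for 14 consecutive nights.
total_sleep_minutes = [
    420, 400, 450, 410, 430, 390, 440,  # week 1
    400, 380, 410, 390, 420, 370, 430,  # week 2
]

averages = weekly_means(total_sleep_minutes)
change = averages[-1] - averages[0]
print(f"weekly averages: {averages}")
print(f"change from first to last week: {change:+.0f} minutes")
```

Averaging smooths out night-to-night measurement noise, so a shift that persists across several weekly averages is more likely to reflect a real change than any one night's reading.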

Can Sleep Tracker Data Cause More Harm Than Good?

Baron et al. (2017) coined the term orthosomnia to describe individuals who became preoccupied with achieving the sleep scores their wearable devices reported. Behaviors included spending more time in bed than needed (which can worsen insomnia by weakening the association between bed and sleep), avoiding evening exercise or social events to protect sleep scores, and developing anxiety about low numbers that may not correspond to poor sleep quality.

The validation data supports this concern. Users who run two or three devices simultaneously often see different stage numbers from the same night — a pattern consistent with the kappa values of 0.20-0.53 across devices in the published validation studies. When two trackers disagree on whether deep sleep was 45 minutes or 80 minutes, neither number is necessarily wrong, but both are imprecise estimates of a value that can only be measured by electroencephalography (EEG).

One often-cited comparison: Miller et al. (2020) found that WHOOP achieved 64% four-stage agreement with PSG, while trained human PSG technicians agree with each other approximately 83% of the time. The reference standard itself has variability. Wearable accuracy exists on a spectrum rather than as a binary yes/no against a fixed truth.

The Birrer et al. (2024) scoping review flagged a gap in external validity: only 2 of 35 studies included participants with an average age above 50. Sleep architecture changes with aging: slow-wave sleep declines, sleep becomes more fragmented, and heart rate patterns change. The validation data behind tracker accuracy figures comes predominantly from young, healthy adults, not from the population that tends to have the sleep concerns driving tracker purchases.

A working rule for when to treat tracker data as informative and when to set it aside: consistent multi-week trends in total sleep time or resting heart rate carry weight. A single night showing 12 minutes of deep sleep does not, because a one-night stage reading can be that far off given the error ranges documented in the validation studies.

Sleep tracker data can identify patterns, but a low sleep score does not tell you why your sleep is disrupted. Autonomic dysregulation, metabolic changes, hormonal changes, and inflammatory processes can all fragment sleep in ways a wearable cannot distinguish. Identifying which causes might be involved is a useful next step beyond the numbers.

Frequently Asked Questions

Can a Sleep Tracker Identify Sleep Apnea or Insomnia?

The distinction between screening and confirmation matters here. A wearable that flags repeated blood oxygen desaturations during the night may be detecting a pattern consistent with obstructive sleep apnea, but it may also be responding to sensor positioning errors, motion artifacts, or normal physiological variation. The 2023 eleven-device study by Lee et al. found that no consumer device category reliably detected the wake fragmentation patterns characteristic of sleep disorders (Lee et al., 2023). The FDA has not cleared any consumer wearable for sleep disorder identification. These devices are wellness products — they can prompt a conversation with a physician, but they cannot replace the respiratory, EEG, and EMG data that defines sleep-disordered breathing.

How Does the Oura Ring Compare to a Sleep Study?

The Robbins et al. (2024) data showed Oura Ring Gen3 was the only device matching PSG on seven of eight metrics examined, with negligible proportional bias. The authors attributed this in part to Oura’s use of skin temperature and continuous heart rate monitoring for circadian rhythm estimation, providing additional data inputs beyond accelerometry and photoplethysmography (PPG) alone. Comparing the Gen2 data from Miller et al. (2022), with 61% multi-stage agreement, to the Gen3 data from Robbins et al. (2024), with 76.0-79.5% sensitivity, suggests firmware and hardware improvements may be closing the accuracy gap over device generations, although the two figures are different metrics from different study samples and are not directly comparable. A sleep study remains the only way to get brain-wave-verified sleep staging and respiratory monitoring.

Should You Trust Your Sleep Tracker’s Rapid Eye Movement (REM) Numbers?

REM sleep produces distinctive physiological patterns that wearables can partially detect: heart rate becomes more variable, breathing becomes irregular, and body movement decreases (due to the muscle atonia that characterizes REM). These patterns are more distinctive than the subtle differences between N1 and N2 light sleep, which is why wearables detect REM more accurately than light sleep (Schyvens et al., 2025). The Kainec et al. (2024) data showed REM mean absolute percent error above 20% for all five devices. If PSG measured 90 minutes of REM, the average miss would be at least 18 minutes, so a device reading below 72 or above 108 minutes would be typical rather than unusual. For tracking whether REM trends are stable, increasing, or declining over weeks, that level of precision may be informative. For deciding whether last night’s REM was adequate, it is not.

Which Consumer Sleep Tracker Has the Highest Validation Accuracy?

The answer depends on which metric matters. For sleep stage classification, the Oura Ring Gen3 leads among ring and wrist devices in the Robbins et al. (2024) data. For overall epoch-level agreement, Apple Watch Series 8 achieved the highest kappa (0.53) in the Schyvens et al. (2025) six-device study. For staging accuracy across device categories, the under-mattress SleepRoutine tracker outperformed all wrist wearables in the Lee et al. (2023) 11-device study (macro F1 = 0.69 versus the best wrist device at F1 = 0.57). Device selection should match the parameter of interest — and firmware updates change accuracy over time, meaning validation data from one device generation may not apply to the next.

Related Reading:

- Autonomic Sleep Disruption: What It Is, How It Fragments Sleep, and How to Recognize It — how autonomic imbalance disrupts sleep architecture

- Can Your Nervous System Get Stuck in Fight or Flight and Ruin Your Sleep? — the self-reinforcing cortisol-sleep loop

- What Is Hyperarousal Insomnia? Why You’re Wired but Tired Every Night — autonomic mechanics behind the wired-but-tired state

- Why Won’t Your Brain Shut Off at Night? The Autonomic Connection — GABA receptor impairment and sympathetic overactivation

- Why Is Your Rapid Eye Movement Sleep Fragmented? The Brainstem Switch That Controls It — the brainstem circuit and cholinergic pathway

- Does Benadryl Destroy Your Sleep? How Anticholinergic Drugs Suppress REM — anticholinergic medications and REM suppression

- What Your Overnight Heart Rate Variability Is Telling You About Your Sleep: The Vagal Tone Connection — HRV as a window into parasympathetic recovery

- How Your Gut Talks to Your Brain Through the Vagus Nerve — and Why It Matters for Sleep — gut-vagus-brain pathway

- Do Antidepressants Suppress Rapid Eye Movement Sleep? What SSRIs, SNRIs, and Tricyclics Do to Sleep Architecture — serotonergic REM suppression from medications

- Can Vagus Nerve Stimulation Devices Improve Insomnia? What the Research Shows — taVNS device evidence for insomnia

- Is Your Insomnia a Nervous System Problem? How to Tell the Difference — differentiating autonomic insomnia from other types

References

Birrer, V., Elgendi, M., Gao, R., & Menon, C. (2024). Evaluating reliability in wearable devices for sleep staging. npj Digital Medicine, 7(1), 74. https://pubmed.ncbi.nlm.nih.gov/38499793/

Kainec, K. A., Cain, J. A., Engel, C. E., Garbarine, I. C., Gorsline, A. L., Polon, S. P., Shanahan, K. G., Sprecher, K. E., & Benca, R. M. (2024). Evaluating accuracy in five commercial sleep-tracking devices compared to research-grade actigraphy and polysomnography. Sensors, 24(2), 635. https://pubmed.ncbi.nlm.nih.gov/38276327/

Lee, T., Kim, M., Kim, S. P., Hong, S., Han, B., Roh, T., Park, I., Song, Y., Lee, J., & Hong, S. (2023). Accuracy of 11 wearable, nearable, and airable consumer sleep trackers: prospective multicenter validation study. JMIR mHealth and uHealth, 11, e50983. https://pubmed.ncbi.nlm.nih.gov/37917155/

Miller, D. J., Lastella, M., Scanlan, A. T., Bellenger, C., Halson, S. L., Roach, G. D., & Sargent, C. (2020). A validation study of the WHOOP strap against polysomnography to assess sleep. Journal of Sports Sciences, 38(22), 2561-2567. https://pubmed.ncbi.nlm.nih.gov/32713257/

Miller, D. J., Sargent, C., & Roach, G. D. (2022). A validation of six wearable devices for estimating sleep, heart rate and heart rate variability in healthy adults. Sensors, 22(16), 6317. https://pubmed.ncbi.nlm.nih.gov/36016077/

Robbins, R., Quan, S. F., Engel, B. J., Buxton, O. M., Youngstedt, S. D., Hale, L., Johnson, D. A., & Grandner, M. A. (2024). Accuracy of three commercial wearable devices for sleep tracking in healthy adults. Sensors, 24(20), 6532. https://pubmed.ncbi.nlm.nih.gov/39460013/

Schyvens, A. M., Sweetman, A., Lechat, B., Reynolds, A. C., Adams, R. J., & Catcheside, P. G. (2025). A performance validation of six commercial wrist-worn wearable sleep-tracking devices for sleep stage scoring compared to polysomnography. Sleep Advances, 6(1), zpaf011. https://pubmed.ncbi.nlm.nih.gov/40303381/

Written by Kat Fu, M.S., M.S. · Last reviewed: April 2026 · 7 references cited